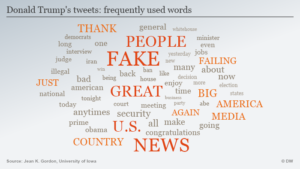

The quick answer to the question in the title is yes, but maybe not for long. This week there was an interesting podcast on US public radio (KERA’s Think), “AI could drive most languages to extinction”, which features a conversation with Matteo Wong, a staff writer for The Atlantic, who published a piece on the same topic this spring, “The AI Revolution Is Crushing Thousands of Languages”. Both the podcast and the article deal with the troublesome issue of the poor representation in AI of low-resource languages, i.e. languages which do not have a large written record and which may be under-represented online. As a result, those languages, in contrast to high-resource languages like English, Chinese, Spanish, French, or Japanese (and other European and Western languages), make up very little of the training data for generative AI systems like ChatGPT. Consequently, AI systems have little knowledge of those languages and are likely to perform poorly in areas such as translation, providing accurate information, or even generating coherent texts.

Wong gives the example of a linguist from Benin asking ChatGPT to provide a response in Fon, a language of the Atlantic-Congo family spoken by millions in Benin and neighboring countries. The AI responded that it was not able to comply, as Fon was “a fictional language”. An additional problem for low-resource languages is that the texts that do appear online may not have been written by speakers of the language but machine-translated instead, and are therefore of potentially questionable quality.

This means that AI, an increasingly important source of information in today’s world, will be unavailable to those who do not know English or another high-resource language. Wong cites David Adelani, a DeepMind research fellow at University College London, pointing out that “even when AI models are able to process low-resource languages, the programs require more memory and computational power to do so, and thus become significantly more expensive to run—meaning worse results at higher costs”. That means there is little incentive for AI companies like OpenAI, Meta, or Google to develop capabilities in languages like Fon.

The information about low-resource languages is not just linguistically deficient, but culturally problematic as well:

> AI models might also be void of cultural nuance and context, no matter how grammatically adept they become. Such programs long translated “good morning” to a variation of “someone has died” in Yoruba, Adelani said, because the same Yoruba phrase can convey either meaning. Text translated from English has been used to generate training data for Indonesian, Vietnamese, and other languages spoken by hundreds of millions of people in Southeast Asia. As Holy Lovenia, a researcher at AI Singapore, the country’s program for AI research, told me, the resulting models know much more about hamburgers and Big Ben than local cuisines and landmarks.

The lack of support for most of the world’s 7,000 languages is evident from the fact that Google’s Gemini supports 35 languages and ChatGPT 50. As Wong notes, this is not just a practical problem for speakers of low-resource languages: the lack of support also sends the message that those speakers’ languages are not valued. There is of course also the danger that languages lacking AI support will become less widely spoken, as they are perceived as not offering the personal and professional benefits of high-resource languages. Losing languages means losing the human values associated with them, for example knowledge of the natural world tied to Indigenous languages, unique cultural values, and traditional stories.

Wong points out that there are efforts to remedy this situation, such as Meta’s No Language Left Behind project. That initiative is developing open-source models for “high-quality translations directly between 200 languages—including low-resource languages like Asturian, Luganda, Urdu and more”. The Aya project is a global initiative led by the non-profit Cohere For AI, involving researchers in 119 countries who are seeking to develop a multilingual AI for 101 languages as an open resource. That system features human-curated annotations from fluent speakers of many languages. Masakhane is a grassroots organization whose mission is to strengthen and spur NLP research “in African languages, for Africans, by Africans”.

Let us hope that such initiatives can help bring AI to more languages and cultures. However, the power of the big AI systems is such that only if they commit to adding more diverse language data to their training corpus will AI truly become more fully multilingual and multicultural.
